Third edition of Artificial Intelligence: foundations of computational agents, Cambridge University Press, 2023 is now available (including the full text).

9.5.5 Dynamic Decision Networks

The MDP is a state-based representation. In this section, we consider a feature-based extension of MDPs, which forms the basis for what is known as decision-theoretic planning.

The representation of a dynamic decision network (DDN) can be seen in a number of different ways:

- a factored representation of MDPs, where the states are described in terms of features;

- an extension of decision networks to allow repeated structure for ongoing actions and state changes;

- an extension of dynamic belief networks to include actions and rewards; and

- an extension of the feature-based representation of actions to allow for uncertainty in the effect of actions.

A fully observable dynamic decision network consists of

- a set of state features, each with a domain;

- a set of possible actions forming a decision node A, with domain the set of actions;

- a two-stage belief network with an action node A, nodes F0 and F1 for each feature F (for the features at time 0 and time 1, respectively), and a conditional probability P(F1|parents(F1)) such that the parents of F1 can include A and features at times 0 and 1 as long as the resulting network is acyclic; and

- a reward function that can be a function of the action and any of the features at times 0 or 1.

As in a dynamic belief network, the features at time 1 can be replicated for each subsequent time.

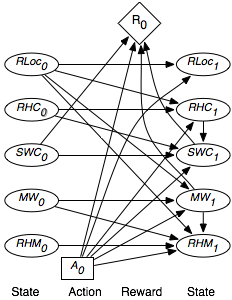

The parents of RLoc1 are Rloc0 and A. The parents of RHC1 are RHC0, A, and RLoc0; whether the robot has coffee depends on whether it had coffee before, what action it performed, and its location.

The parents of SWC1 include SWC0, RHC0, A, and RLoc0. You would not expect RHC1 and SWC1 to be independent because they both depend on whether or not the coffee was successfully delivered. This could be modeled by having one be a parent of the other. The two-stage belief network of how the state variables at time 1 depends on the action and the other state variables is shown in Figure 9.17. This figure also shows the reward as a function of the action, whether Sam stopped wanting coffee, and whether there is mail waiting.

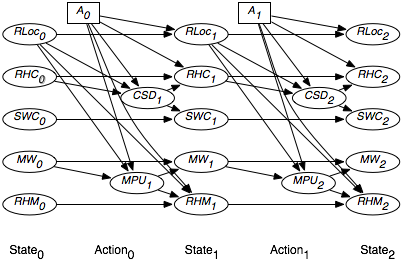

An alternative way to model the dependence between RHC1 and SWC1 is to introduce a new variable, CSD1, which represents whether coffee was successfully delivered at time 1. This variable is a parent of both RHC1 and SWC1. Whether Sam wants coffee is a function of whether Sam wanted coffee before and whether coffee was successfully delivered. Whether the robot has coffee depends on the action and the location, to model the robot picking up coffee. Similarly, the dependence between MW1 and RHM1 can be modeled by introducing a variable MPU1, which represents whether the mail was successfully picked up. The resulting DDN replicated to a horizon of 2, but omitting the reward, is shown in Figure 9.18.

As part of such a decision network, we should also model the information available to the actions and the rewards. In a fully observable dynamic decision network, the parents of the action are all the previous state variables. Because this can be inferred, the arcs are typically not explicitly drawn. If the reward comes only at the end, variable elimination for decision networks, shown in Figure 9.11, can be applied directly. Note that we do not require the no-forgetting condition for this to work; the fully observable condition suffices. If rewards are accrued at each time step, the algorithm must be augmented to allow for the addition of rewards. See Exercise 9.12.