Artificial

Intelligence 2E

foundations of computational agents

The third edition of Artificial Intelligence: foundations of computational agents, Cambridge University Press, 2023 is now available (including full text).

3.2 State Spaces

Dimensions: flat, states, indefinite horizon, fully observable, deterministic, goal directed, non-learning, single agent, offline, perfect rationality

One general formulation of intelligent action is in terms of a state space. A state contains all of the information necessary to predict the effects of an action and to determine whether a state satisfies the goal. State-space searching assumes:

-

•

The agent has perfect knowledge of the state space and is planning for the case where it observes what state it is in: there is full observability.

-

•

The agent has a set of actions that have known deterministic effects.

-

•

The agent can determine whether a state satisfies the goal.

A solution is a sequence of actions that will get the agent from its current state to a state that satisfies the goal.

Example 3.2.

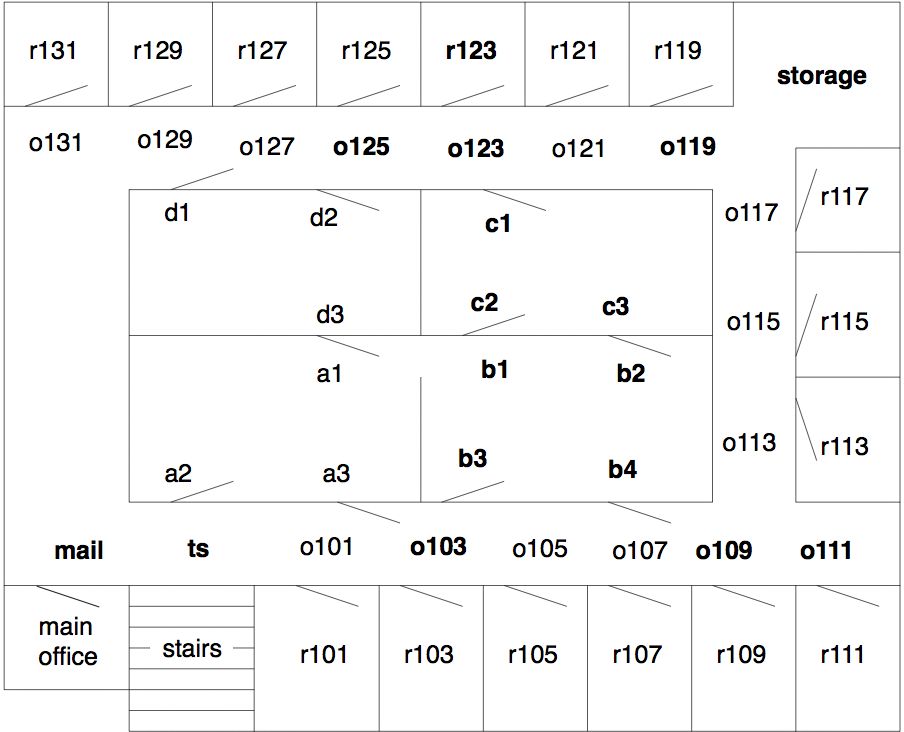

Consider the robot delivery domain of Figure 3.1, where the only way a robot can get through a doorway is to push the door open in the direction shown. The task is to find a path from one location to another. Assuming that the agent can use a lower-level controller to get from one location to a neighboring location, the actions can involve deterministic traveling between neighboring locations. This can be modeled as a state-space search problem, where the states are locations.

Consider an example problem with the robot outside room , at position , and the goal is to get to room . Thus, is the only state that satisfies the goal. A solution is a sequence of actions that moves the robot from to room .

Example 3.3.

In a more complicated example, the delivery robot may have a number of parcels to deliver to various locations, where each parcel has its own delivery destination. In this case, the state may consist of the location of the robot, which parcels the robot is carrying, and the locations of the other parcels. The possible actions may be for the robot to move, to pick up parcels that are at the same location as the robot, or to put down some or all of the parcels it is carrying. A goal state may be one in which some specified parcels are at their desired locations. There may be many goal states because we may not care where the robot is or where some of the other parcels are in a goal state.

Notice that this representation has ignored many details, for example, how the robot is carrying the parcels (which may affect whether it can carry other parcels), the battery level of the robot, whether the parcels are fragile or damaged, and the color of the floor. By not having these as part of the state space, we assume that those details are not relevant to the problem at hand.

Example 3.4.

In a tutoring system, a state may consist of the set of topics that the student knows. The action may be teaching a particular lesson, and the result of a teaching action may be that a student knows the topic of the lesson as long as the student knows the topics that are prerequisites for the lesson being taught. The aim is for a student to know a particular set of topics.

If the effect of teaching also depends on the aptitude of the student, this detail must be part of the state space as well. We do not have to model what the student is carrying if that does not affect the result of actions or whether the goal is achieved.

A state-space problem consists of

-

•

a set of states

-

•

a distinguished state called the start state

-

•

for each state, a set of actions available to the agent in that state

-

•

an action function that, given a state and an action, returns a new state

-

•

a goal specified as a Boolean function, , that is true when state satisfies the goal, in which case we say that is a goal state

-

•

a criterion that specifies the quality of an acceptable solution. For example, any sequence of actions that gets the agent to the goal state may be acceptable, or there may be costs associated with actions and the agent may be required to find a sequence that has minimal total cost. A solution that is best according to some criterion is called an optimal solution. We do not always need an optimal solution, for example, we may be satisfied with any solution that is within 10% of optimal.

This framework is extended in subsequent chapters to include cases where the states have structure that can be exploited, where the state is not fully observable (e.g., the robot does not know where the parcels are initially, or the teacher does not know the aptitude of the student), where the actions are stochastic (e.g., the robot may overshoot, or a student perhaps does not learn a topic that is taught), and with complex preferences in terms of rewards and punishments, not just a set of goal states.